Watermarks, Adversarial Defenses & Transferable Attacks

A unified game-theoretic framework connecting watermarking, adversarial robustness, and transferable attacks via interactive protocols.

As AI systems become more pervasive, the question of when they can be secured — and when they fundamentally cannot — becomes critical. This project develops a unified theoretical framework for watermarking, adversarial defenses, and transferable attacks.

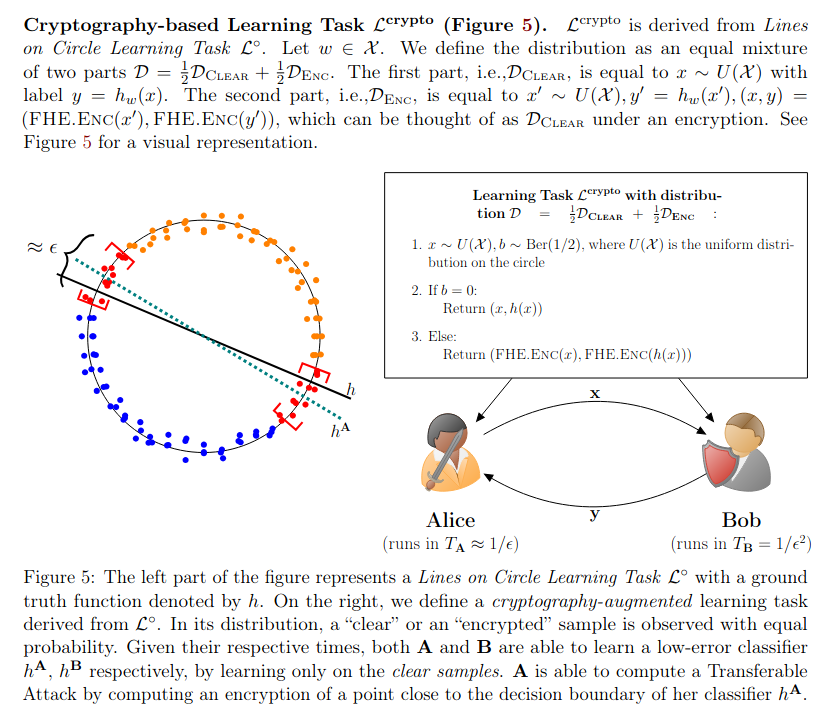

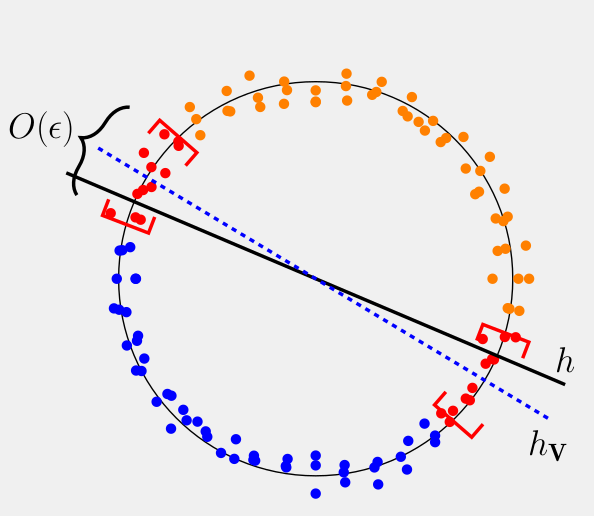

We model each scheme as an interactive protocol between two time-bounded players. Our main result shows that for almost every learning task, at least one of the three schemes must exist: a watermark, an adversarial defense, or a transferable attack. The “almost every” qualifier is key: we also identify a third, counterintuitive regime in which neither watermarks nor defenses exist, but efficient transferable attacks do. We establish this regime with a construction based on homomorphic encryption, which yields a formal equivalence between transferable attacks and cryptographic hardness.
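
Read informally, the main result is a trichotomy. The notation below is illustrative shorthand and not necessarily that of the papers: $\mathcal{T}$ stands for a learning task, and each predicate is an assumed label meaning that the corresponding scheme exists for $\mathcal{T}$ in the two-player, time-bounded setting.

$$
\text{for almost every } \mathcal{T}: \quad \mathrm{Watermark}(\mathcal{T}) \;\lor\; \mathrm{Defense}(\mathcal{T}) \;\lor\; \mathrm{TransferableAttack}(\mathcal{T})
$$

The disjunction says only that at least one scheme exists. The counterintuitive regime is the case where the first two predicates fail and only the last one holds.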

Papers: