Berkant Turan

ML Researcher @ IOL Lab at ZIB | PhD Candidate @ TU Berlin | Member @ Berlin Mathematical School

I am a fourth-year PhD candidate at TU Berlin, working with Sebastian Pokutta in the IOL group at Zuse Institute Berlin. I am also a member of the Berlin Mathematical School, part of the Math+ Excellence Cluster.

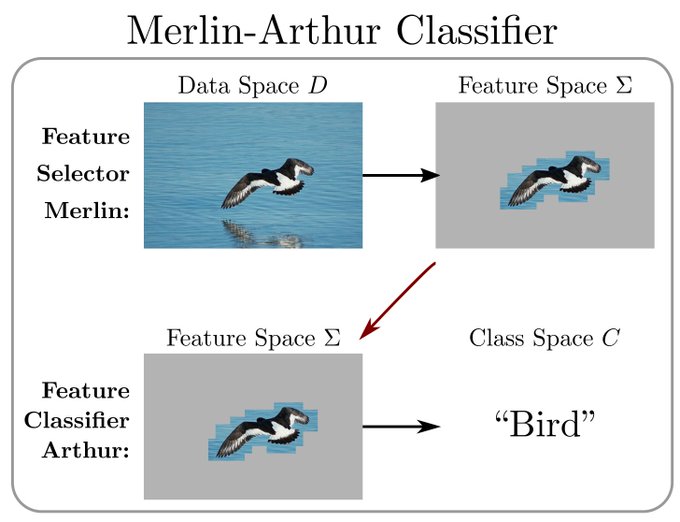

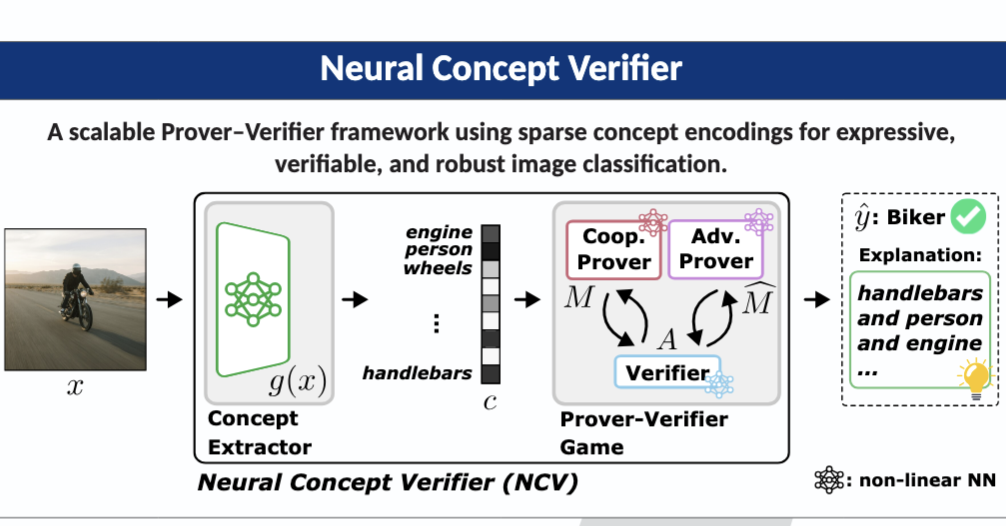

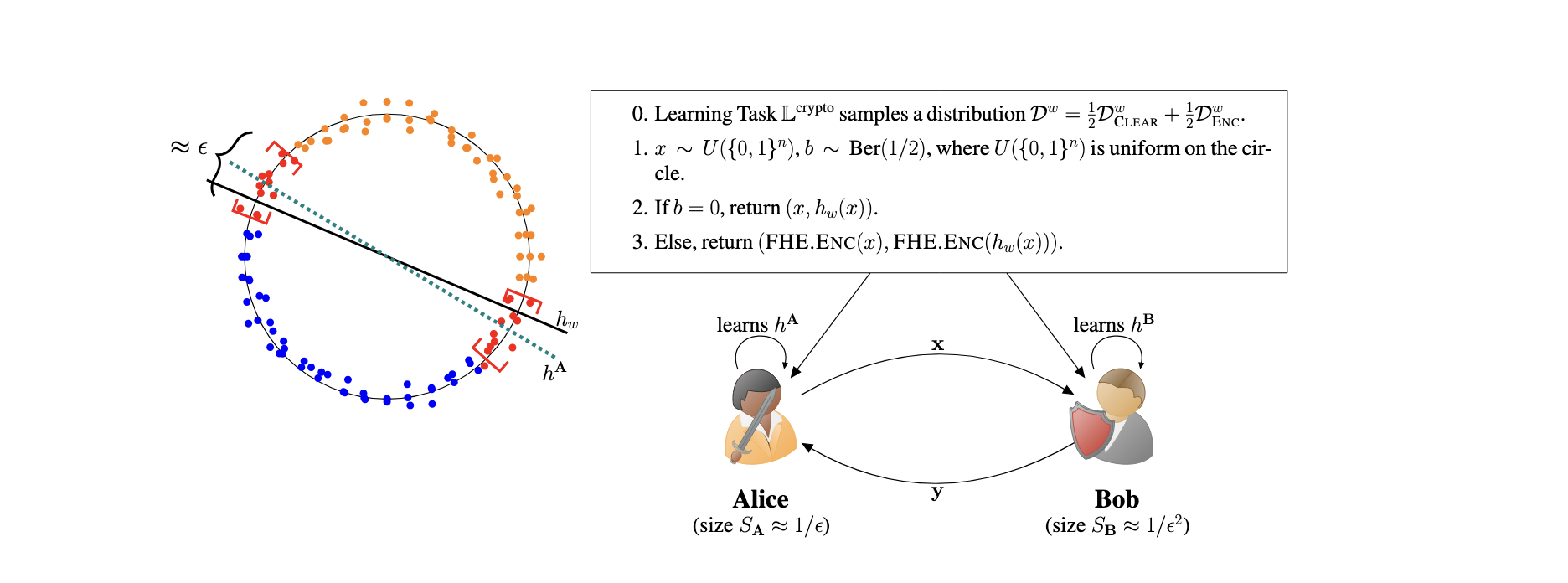

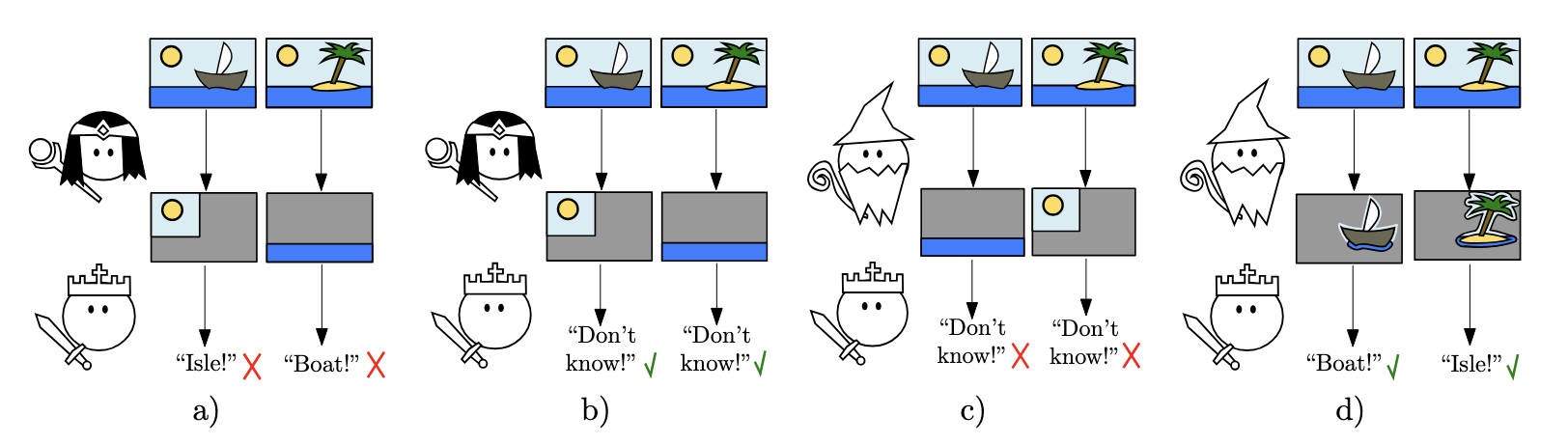

I work on Prover-Verifier Games for Trustworthy ML. The idea: build multi-agent models (Merlin-Arthur classifiers, concept-based verifiers) where the evidence behind a prediction can be formally checked, not just eyeballed. I also study the theoretical limits of model security, from adversarial robustness and backdoor watermarks to transferable attacks, and how these connect to cryptography.

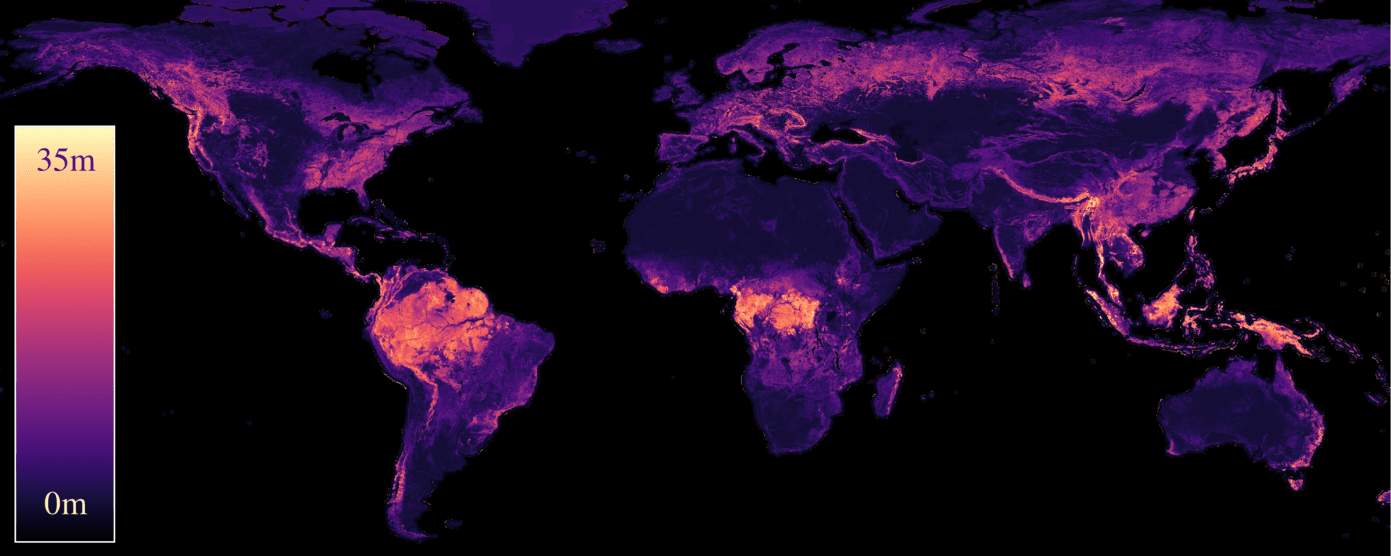

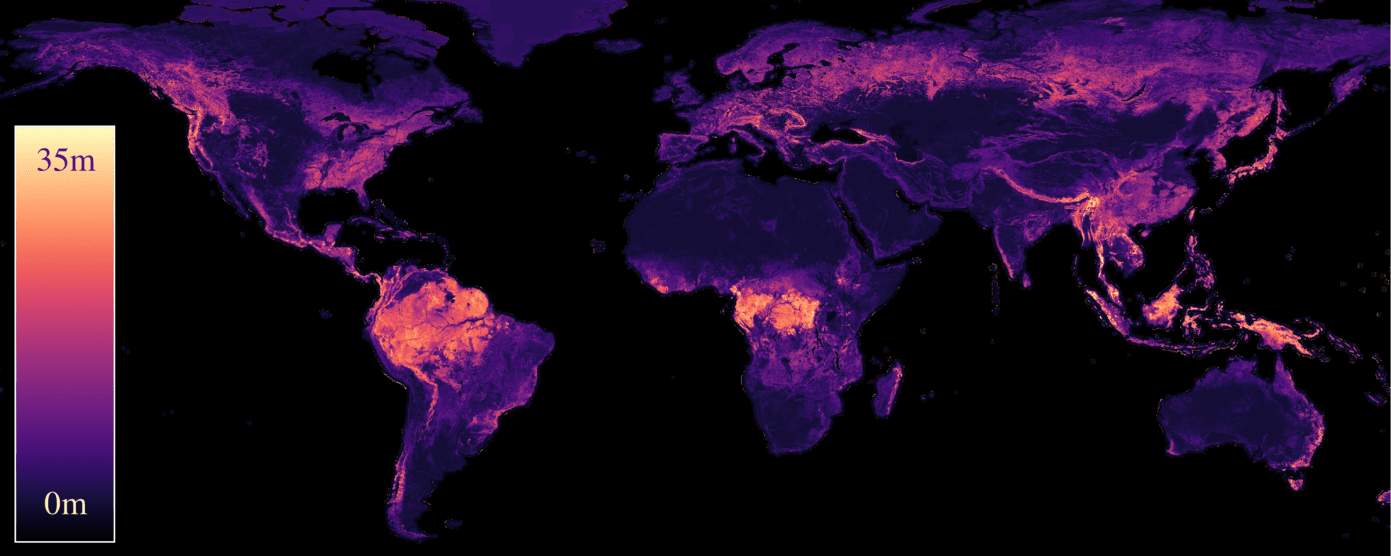

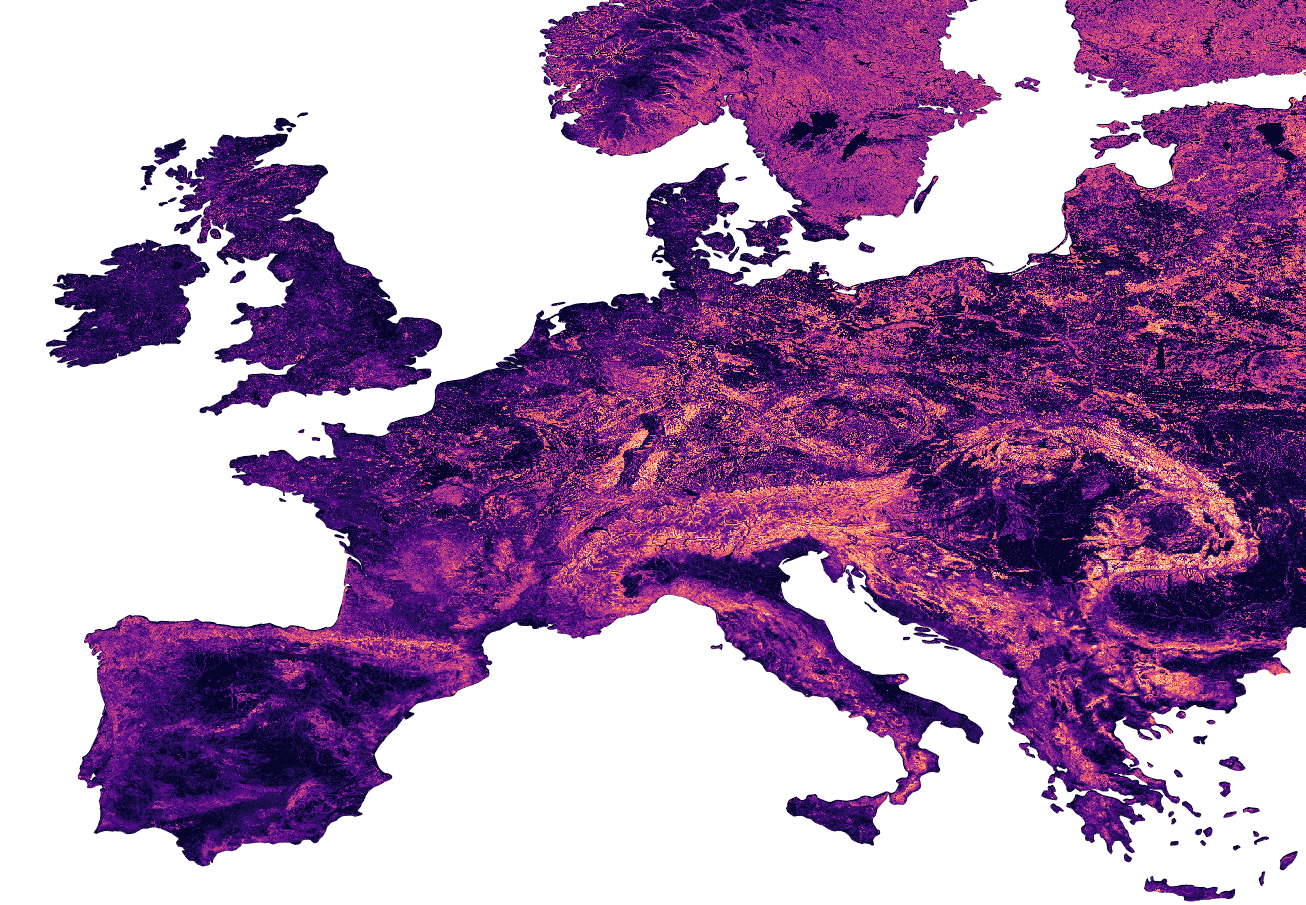

On the applied side, I work on ML for Earth observation as part of the AI4Forest project. We build scalable pipelines that turn satellite data (Sentinel-1/2, GEDI) into high-resolution canopy height maps and biomass estimates, enabling large-scale forest monitoring.

Before my PhD, I worked on hybrid discriminative-generative models, studying how VAEs and ResNets can be combined for out-of-distribution detection and robustness in computer vision.

If any of this sounds interesting, feel free to reach out. I am always happy to chat about research, potential collaborations, or new ideas.

Research Highlights

news

| Date | News |
|---|---|
| 05/2026 | Neural Concept Verifier accepted as a spotlight at ICML 2026 (top 2.2% of 23,918 reviewed submissions). We scale Prover-Verifier Games to high-dimensional inputs via concept encodings for verifiable AI. Joint work with S. Asadulla, D. Steinmann, K. Kersting, W. Stammer, and S. Pokutta. |
| 02/2026 | New preprint: ECHOSAT: Estimating Canopy Height Over Space And Time is out on arXiv. We built the first globally consistent temporal tree height map at 10m resolution. Turns out you can watch forests grow (and burn) from space. Joint work with J. Pauls, K. Schrödter, S. Ligensa, M. Schwartz, M. Zimmer, S. Saatchi, S. Pokutta, P. Ciais, and F. Gieseke. |
| 09/2025 | The Good, the Bad and the Ugly got accepted at NeurIPS 2025. We show that for almost every learning task, either a watermark or an adversarial defense must exist. Joint work with Grzegorz Głuch, Sai Ganesh Nagarajan, and Sebastian Pokutta. |
| 06/2025 | Neural Concept Verifier accepted at the ICML 2025 Workshop on Actionable Interpretability. We combine Prover-Verifier Games with concept encodings to get verifiable predictions on high-dimensional inputs. With S. Asadulla, D. Steinmann, K. Kersting, W. Stammer, and S. Pokutta. |
| 05/2025 | Our work on Capturing Temporal Dynamics in Large-Scale Canopy Tree Height Estimation got accepted at ICML 2025. First 10m resolution temporal canopy height map of Europe. With J. Pauls, M. Zimmer, S. Saatchi, P. Ciais, S. Pokutta, and F. Gieseke. |
| 03/2025 | The Good, the Bad and the Ugly accepted at the ICLR 2025 Workshop on GenAI Watermarking. |
| 10/2024 | Excited to announce that The Good, the Bad and the Ugly: Watermarks, Transferable Attacks and Adversarial Defenses is now available on arXiv! Many thanks to my collaborators, Grzegorz Głuch (EPFL at the time), Sai Ganesh Nagarajan (ZIB) and Sebastian Pokutta (ZIB), for their contributions to this project! |
| 06/2024 | Unified Taxonomy of AI Safety: Watermarks, Adversarial Defenses and Transferable Attacks got accepted at ICML 2024 Workshop on Theoretical Foundations of Foundation Models. See you in Vienna! |
| 03/2024 | Our recent paper, Interpretability Guarantees with Merlin-Arthur Classifiers, has been accepted at AISTATS 2024. Looking forward to meeting you in Valencia. |
| 07/2023 | I received the Best Proposal Award at the xAI-2023 Doctoral Consortium in Lisbon for my research on Extending Merlin-Arthur Classifiers for Improved Interpretability. Thank you to the reviewers and organizers! |
| 09/2022 | Excited to have started my PhD at TU Berlin and the Zuse Institute Berlin in the Interactive Optimization and Learning research lab, under the supervision of Sebastian Pokutta. |

selected publications

- Preprint

- ICML 2025. Capturing Temporal Dynamics in Large-Scale Canopy Tree Height Estimation. In International Conference on Machine Learning (ICML), 2025. (\* Equal contribution)

- Doc. Consortium